General/What is Viper

Revision as of 10:48, 23 February 2017

Introduction

Viper is the University of Hull's supercomputer. It is located on site and has its own dedicated team to administer it and develop applications for it.

A supercomputer is a computer with a much higher level of computing performance than a general-purpose computer. It achieves this performance by letting the user split a large job into smaller computational tasks that run in parallel, or by running the same task against many different scenarios of the data at once across parallel processing units.
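
To make the idea of splitting a large job concrete, here is a minimal single-machine sketch in Python using the standard-library `multiprocessing.Pool`. It is only an analogy: on Viper the pieces would be spread across many cores and nodes by the cluster's own job tools, which this example does not use or describe. The function names (`partial_sum`, `split`, `parallel_sum_of_squares`) are hypothetical, chosen for illustration.

```python
from multiprocessing import Pool


def partial_sum(bounds):
    """Compute the sum of squares over one chunk [start, stop)."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def split(n, parts):
    """Split range(n) into at most `parts` contiguous (start, stop) chunks."""
    step = max(1, -(-n // parts))  # ceiling division, at least 1
    return [(s, min(s + step, n)) for s in range(0, n, step)]


def parallel_sum_of_squares(n, workers=4):
    """Split the job into chunks, run them in parallel, then combine the results."""
    with Pool(processes=workers) as pool:
        return sum(pool.map(partial_sum, split(n, workers)))


if __name__ == "__main__":
    n = 1_000_000
    result = parallel_sum_of_squares(n)
    # The parallel result must match a plain serial loop over the same range.
    assert result == sum(i * i for i in range(n))
    print(result)
```

Each worker handles one independent chunk and only the small partial results are combined at the end, which is the same divide-then-combine pattern that lets large jobs scale across a supercomputer.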

Supercomputers generally aim for the maximum in capability computing rather than capacity computing. Capability computing is typically thought of as using the maximum computing power to solve a single large problem in the shortest amount of time. Often a capability system is able to solve a problem of a size or complexity that no other computer can, e.g., a very complex weather simulation application.

The uses of supercomputers are widespread, and they continue to be used in new and novel ways every day. Viper is used by departments and research areas including:

- Astrophysics

- Bio Engineering

- Business School

- Chemistry

- Computer Science

- Computational Linguistics

- Geography

- and many more

Like almost all other supercomputers, Viper runs Linux, which is similar to UNIX in many ways and is supported by a wide body of software.

Viper Specifications

Physical Hardware

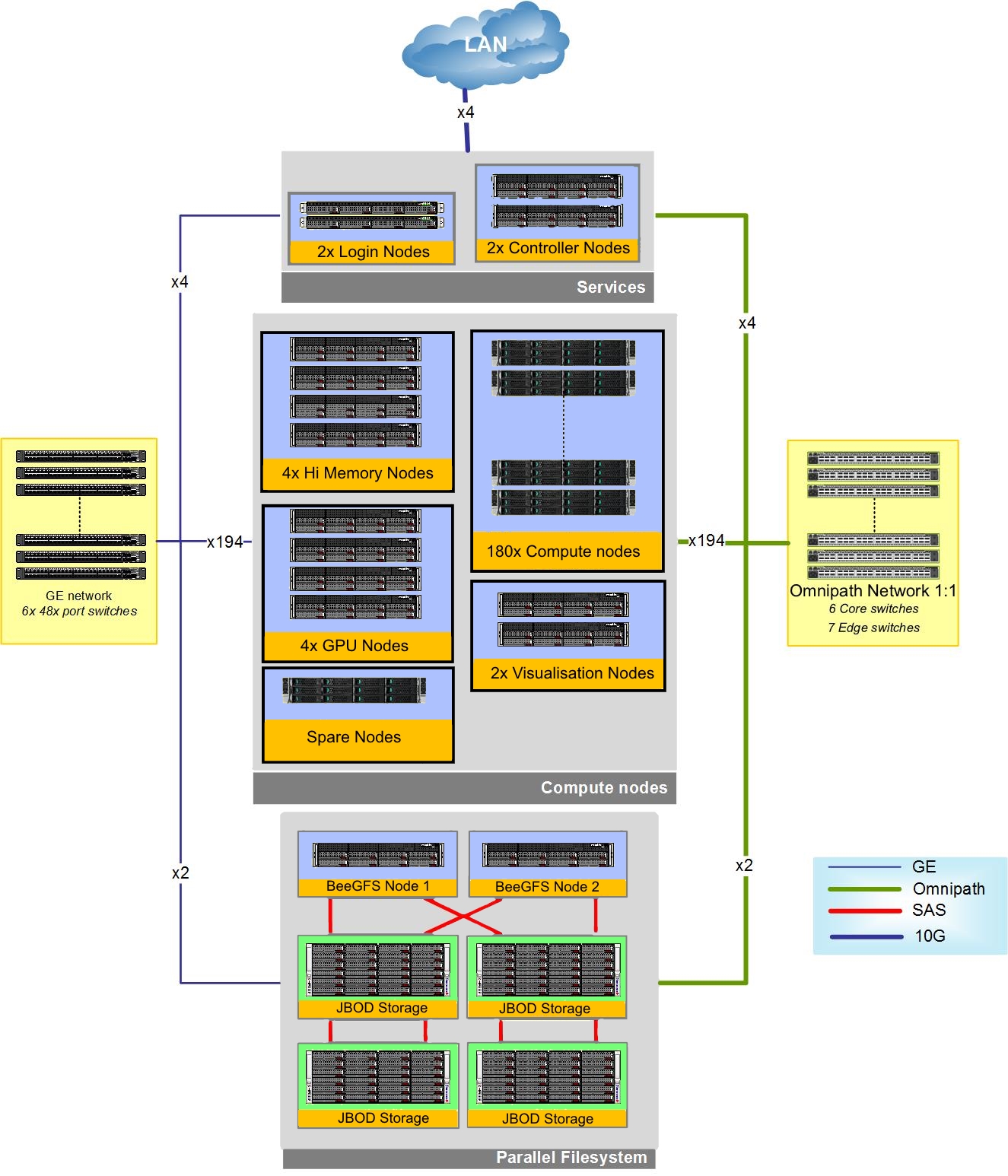

Viper is based around the Linux operating system and is composed of approximately 5,500 processing cores with the following specialised areas:

- 180 compute nodes, each with 2x 14-core Broadwell E5-2680v4 processors (2.4–3.3 GHz) and 128 GB DDR4 RAM

- 4 high-memory nodes, each with 4x 10-core Haswell E5-4620v3 processors (2.0 GHz) and 1 TB DDR4 RAM

- 4 GPU nodes, each identical to the compute nodes with the addition of 4x Nvidia Tesla K40m GPUs per node

- 2 visualisation nodes with 2x Nvidia GTX 980 Ti

- Intel Omni-Path interconnect (100 Gb/s node-to-switch and switch-to-switch)

- 500 TB parallel file system (BeeGFS)
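
As a quick cross-check of the core count, the sketch below multiplies out the node list. The listed compute, high-memory and GPU nodes come to 5,312 cores; the remainder of the "approximately 5,500" figure presumably comes from nodes whose CPUs are not itemised here (such as the visualisation nodes), which is an assumption rather than something stated above. The dictionary layout and the `cores_by_type` function name are illustrative only.

```python
# Per-node CPU layout taken from the specification list above.
# GPU nodes are "identical to compute nodes", so they reuse the 2x 14-core layout.
# Visualisation nodes are left out because their CPUs are not listed (assumption).
NODE_TYPES = {
    "compute": {"nodes": 180, "sockets": 2, "cores_per_socket": 14},
    "high_memory": {"nodes": 4, "sockets": 4, "cores_per_socket": 10},
    "gpu": {"nodes": 4, "sockets": 2, "cores_per_socket": 14},
}


def cores_by_type(node_types):
    """Return the total core count for each node type."""
    return {
        name: spec["nodes"] * spec["sockets"] * spec["cores_per_socket"]
        for name, spec in node_types.items()
    }


if __name__ == "__main__":
    per_type = cores_by_type(NODE_TYPES)
    for name, cores in per_type.items():
        print(f"{name:12s} {cores:5d} cores")
    # 5040 + 160 + 112 = 5312
    print(f"{'total':12s} {sum(per_type.values()):5d} cores")
```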

Infrastructure

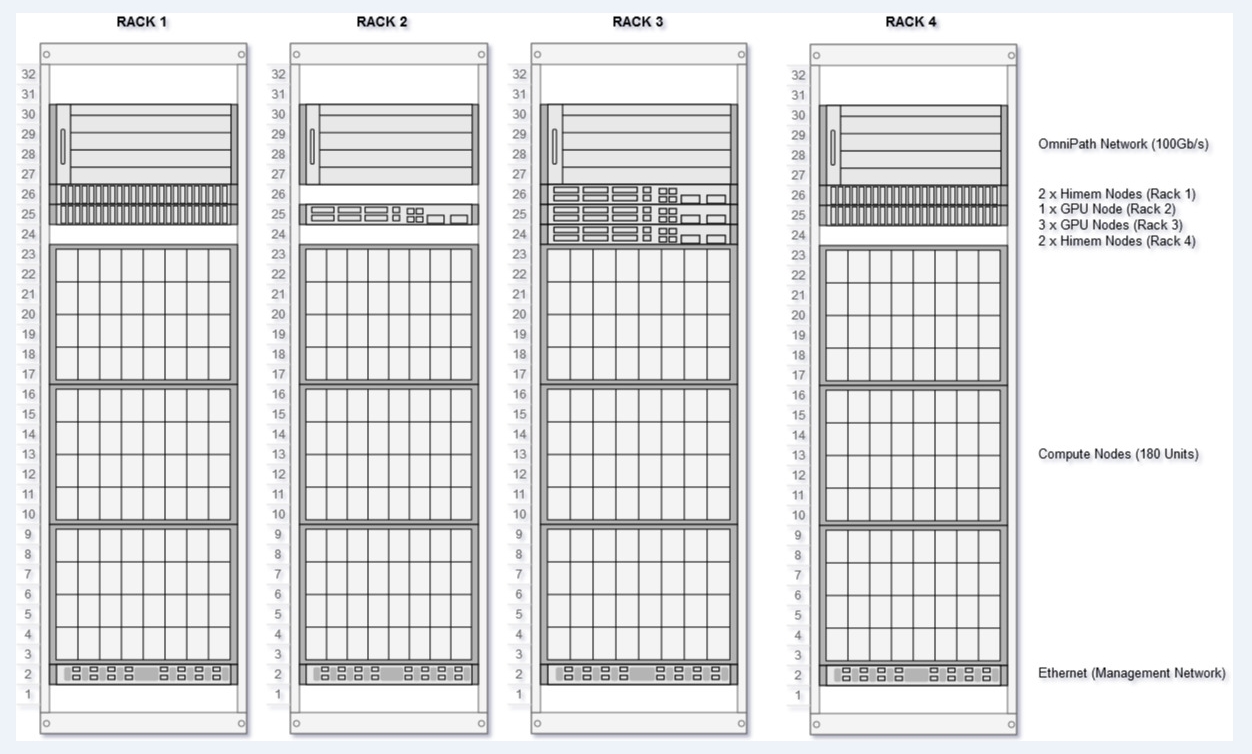

- 4 racks with dedicated cooling and hot-aisle containment (see diagram below)

- Additional rack for storage and management components

- Dedicated, high-efficiency chiller on the AS3 roof for cooling

- UPS and generator power failover

Network Connectivity

The infrastructure described above is connected as follows: